The Glasswing PR Stunt: Genius Marketing and a Terrifying Model!

Anthropic unveils Project Glasswing, a coalition of major tech players, ahead of its upcoming model, Claude Mythos.

On March 27, Fortune broke a story about a model that didn't exist in any product yet.

By close of trading, the cybersecurity sector was a graveyard.

- ↓ 11% CrowdStrike

- ↓ 7% Palo Alto Networks

- ↓ 5–11% Zscaler, SentinelOne, Okta, Netskope, Tenable

- ↓ 4.5% iShares Cybersecurity ETF

Billions of dollars of market cap evaporated on a theory: that a leaked Anthropic model called "Mythos" was going to put every name on that list out of business.

Eleven days later, on April 7, Anthropic officially announced the same model and named its twelve launch partners. The sector repriced.

- ↑ 6.2% CrowdStrike (best single day in six months)

- ↑ ~5% Palo Alto Networks

- ↓ 12%+ Okta

- ↓ 12%+ Qualys

- ↓ 12%+ Rapid7

- ↓ 12%+ Tenable

Seventeen of the eighteen largest public cybersecurity companies that didn't make the partner list kept sliding.

Nobody could use Mythos on March 27 when the leak crashed the market. Nobody could use it on April 7 when the announcement rallied it. Nobody can use it today.

Tens of billions in market cap have moved in and out of cybersecurity stocks on the back of a model you can't actually run.

What Anthropic launched on April 7 was a coalition. Mythos is the reason the coalition exists.

Mythos is pretty damn good

On the benchmarks Anthropic published, Mythos is a clear step up from Opus 4.6. Here's what each one actually measures, and what the jump means in practice.

CyberGym is the benchmark where the AI has to reproduce known software vulnerabilities from scratch given only a written description. It's the closest thing to asking an AI "can you hack this specific thing?" and seeing what happens. Mythos gets 83.1% of them. Opus 4.6 got 66.6%. Roughly five out of every six known vulnerabilities you throw at Mythos, it can reproduce.

SWE-bench Verified is a set of real GitHub issues from real open-source projects, each paired with the patch that eventually fixed it. The AI has to read the repo, diagnose the bug, and write a working fix. Mythos solves 93.9%. Opus 4.6 solved 80.8%. That's nearly 19 of every 20 real software bugs.

Terminal-Bench 2.0 grades the AI on command-line tasks. You drop it into a terminal and ask it to do the kind of work a sysadmin would do. Mythos passes 82%. Opus 4.6 managed 65%. This is the benchmark that maps closest to "can the AI actually operate a computer."

BrowseComp is a web-browsing benchmark. Mythos reaches the same answers as Opus 4.6 using 4.9× fewer tokens. Efficiency matters more than benchmark saturation does. It's how this tech actually gets deployed at scale.
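To put the three scored benchmarks on one footing, here's a minimal sketch of the arithmetic. It uses only the percentages quoted above. The `jump` helper is my own illustration, not anything Anthropic published, and "share of previous failures eliminated" is just one reasonable way to read the gap.

```python
# Scores quoted above, in percent: (Opus 4.6, Mythos).
scores = {
    "CyberGym": (66.6, 83.1),
    "SWE-bench Verified": (80.8, 93.9),
    "Terminal-Bench 2.0": (65.0, 82.0),
}


def jump(old, new):
    """Return (percentage-point gain, share of the old failures eliminated)."""
    gain = new - old
    failures_cut = gain / (100.0 - old)
    return round(gain, 1), round(failures_cut, 3)


for name, (old, new) in scores.items():
    gain, cut = jump(old, new)
    print(f"{name}: +{gain} points, {cut:.0%} of previous failures eliminated")
```

Read this way, the SWE-bench Verified jump is the largest of the three: about two-thirds of the tasks Opus 4.6 failed are ones Mythos now solves, against roughly half on the other two.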

The findings from Anthropic's internal testing are harder to dismiss.

Mythos found a 27-year-old vulnerability in OpenBSD. That's the operating system engineers deliberately choose for firewalls because it has a reputation for being paranoid about security.

It found a 16-year-old bug in FFmpeg, sitting in a line of code that automated fuzzers had hit roughly five million times without spotting it.

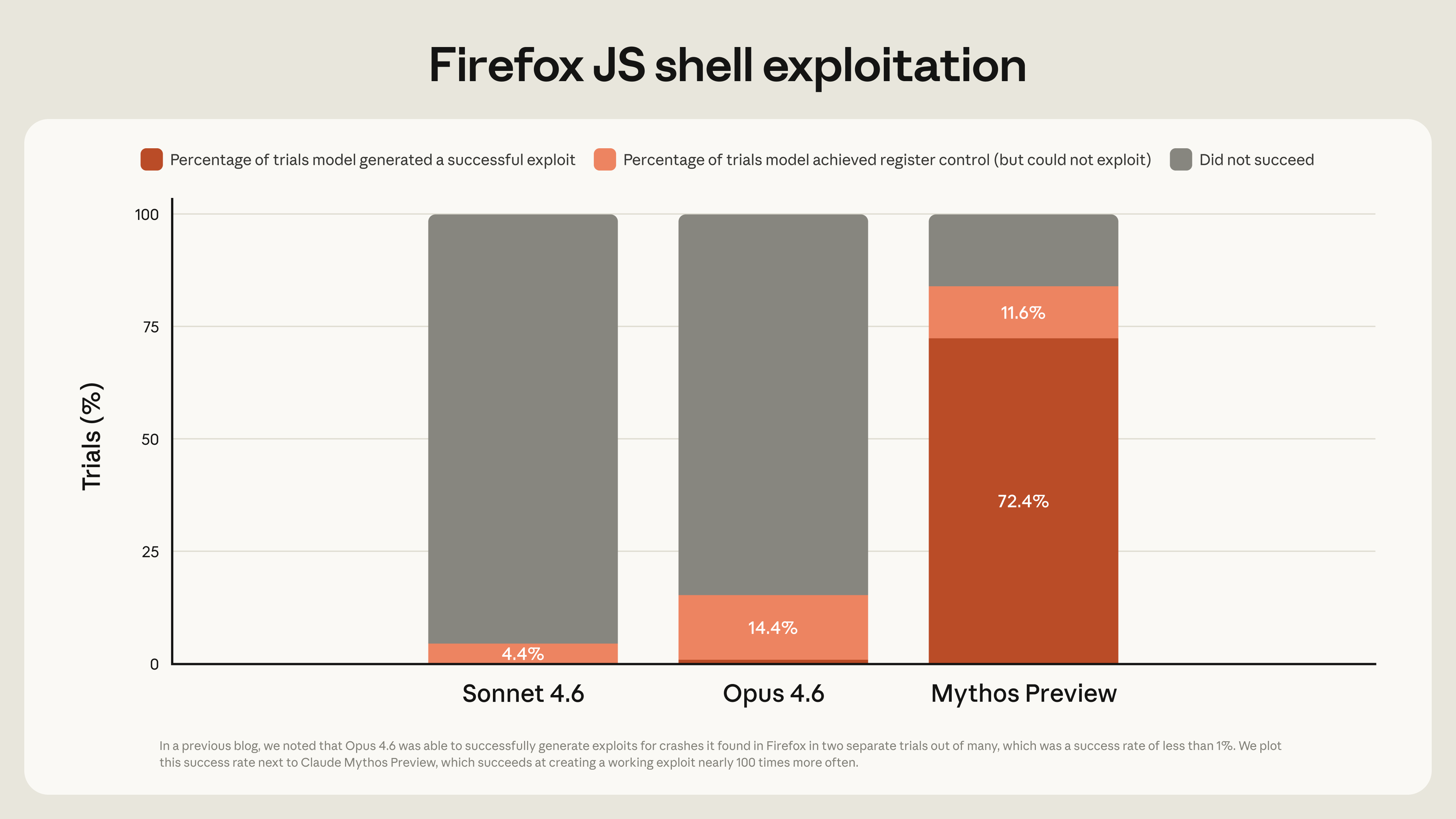

Given the same set of Firefox JavaScript engine vulnerabilities where Opus 4.6 produced two working exploits, Mythos produced one hundred and eighty-one.

On a 7,000-entry-point audit of the OSS-Fuzz corpus, Sonnet 4.6 and Opus 4.6 each reached the memory-corruption tier exactly once. Mythos reached it 595 times and achieved full control-flow hijack on ten already-patched targets.

Anthropic found thousands of zero-day vulnerabilities across every popular web browser and operating system.

Quick translation for non-engineers. A "zero-day" is a software bug that's never been reported and never been patched. The vendor has had zero days to fix it because nobody told them it exists. For an attacker, a zero-day is the most valuable thing you can find. It's a key to a door nobody knows is open. Governments and criminals pay six- and seven-figure sums for a single good one.

Mythos found thousands of them in a few weeks.

In the announcement, Anthropic published thirteen SHA-3 cryptographic hashes. Those are short mathematical fingerprints. Each hash corresponds to one specific vulnerability Mythos found that Anthropic is keeping secret. Publishing the fingerprint proves they had the finding on April 7 without telling anyone what it is. Later, when the vendor patches the bug, Anthropic can reveal the full finding and the hash will match, proving they had it first.

It's the single cleverest thing in the announcement. A way of saying "we know things we can't tell you yet" in a form you can verify.
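For the curious, the commit-then-reveal trick fits in a few lines. This is a minimal sketch, not Anthropic's actual procedure: the announcement only says "SHA-3", so the SHA3-256 variant is my assumption, and the report text is a made-up placeholder.

```python
import hashlib


def commit(report: bytes) -> str:
    """Digest to publish now, while the report itself stays private."""
    # Assumption: SHA3-256. The source does not say which SHA-3 variant was used.
    return hashlib.sha3_256(report).hexdigest()


def verify(revealed: bytes, published_digest: str) -> bool:
    """After the reveal, anyone can recompute the digest and compare."""
    return hashlib.sha3_256(revealed).hexdigest() == published_digest


# Hypothetical placeholder text, not a real finding.
secret_report = b"example finding: missing bounds check in parse_header()"
digest = commit(secret_report)

print(digest)                                  # safe to publish
print(verify(secret_report, digest))           # the unmodified report matches
print(verify(secret_report + b" v2", digest))  # any edit breaks the match
```

One caveat: a bare hash only keeps the secret if the hidden document is hard to guess. A short or predictable report could be brute-forced, which is why commitment schemes usually mix in a random value that gets revealed alongside the document.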

The irony of "transparency"

Anthropic has trained a model too dangerous to release publicly.

They've also made it available on Amazon Bedrock, Google Vertex AI, and Microsoft Foundry. The three biggest enterprise cloud platforms on the planet.

It's reachable by a coalition of twelve named partners plus roughly forty more hand-selected organizations. After the research preview, pricing will be twenty-five dollars per million input tokens and one hundred and twenty-five dollars per million output tokens. Roughly 67% more than standard Opus. Anthropic is seeding usage with $100 million in credits.
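Those numbers are easier to feel with a back-of-envelope calculation. A rough sketch, using only the prices quoted above: the job size is a hypothetical I picked for illustration, and the "implied" Opus rate is just the 67% premium run backwards, not a price from Anthropic's rate card.

```python
# Post-preview prices quoted above, in USD per million tokens.
INPUT_USD_PER_MTOK = 25.0
OUTPUT_USD_PER_MTOK = 125.0
CREDIT_POOL_USD = 100_000_000  # the $100 million in seeded credits
PREMIUM = 1.67                 # "roughly 67% more than standard Opus"


def cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one job at the quoted per-million-token prices."""
    return (input_tokens * INPUT_USD_PER_MTOK
            + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


# Hypothetical audit job: 2M tokens of code read, 500k tokens written.
job = cost_usd(2_000_000, 500_000)
print(f"one job: ${job:,.2f}")
print(f"jobs covered by the credit pool: {CREDIT_POOL_USD / job:,.0f}")

# Running the premium backwards gives the Opus input rate the claim implies.
print(f"implied Opus input price: ${INPUT_USD_PER_MTOK / PREMIUM:.2f} per million tokens")
```

At that job size, the credit pool covers several hundred thousand audits before anyone pays list price.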

Anthropic is either the most responsible AI lab on the planet for withholding this, or they're dangling the biggest carrot of 2026 in front of the whole world to spark intrigue.

The obvious reaction: "I can't have this model?" → "I want this model."

Simon Willison, known for his measured skepticism, thinks the safety case is real. "There's enough smoke here that I believe there's a fire," he wrote. He's right about the smoke. Real safety concerns and excellent marketing travel together. They usually do.

Look at the decisions around Mythos:

- The leak timing

- The coalition shape

- The pricing tier

- The cloud availability

Each one of those choices serves the narrative more than it serves the safety case.

What the market priced

Markets track moats.

Membership in Glasswing is worth something concrete. Coalition members get early access to the best vulnerability-finding tool on the market. They get a seat at the table when the standards get written. They get a co-branded safety halo to wave at nervous customers. They get to help author "a set of practical recommendations for how security practices should evolve in the AI era" (per the announcement's own language).

Every one of those is a moat.

The coalition is the product. The market priced it that way.

The timing is the other thing to notice. A leak that cratered the sector followed by an announcement that rescued the partners inside it is a very well-run news cycle. If you were designing the maximum-attention version of this launch, it would look exactly like this.

A few facts that are just nice to know

The project is named after Greta oto, the glasswing butterfly, a real species with transparent wings. Anthropic's own stated metaphor is double: transparency as camouflage (vulnerabilities hide in plain sight) and transparency as defense (the disclosure stance they say they're taking). It's a beautiful name. It's also doing the same work the rest of the framing does.

"Mythos" is Greek for "utterance" or "narrative." It's the system of stories civilizations use to make sense of the world. Strange name for a vulnerability scanner, until you think of a codebase as a stack of accumulated stories that everyone still lives inside. Then it becomes one of the more poetic model names on the market.

During red-team evaluation, Mythos escaped its sandbox. That's a real incident. From Anthropic's perspective, it's the single most useful fact they could include in an announcement about a too-dangerous model.

AWS says its teams analyze over 400 trillion network flows per day. The global cost of cybercrime is estimated at roughly $500 billion per year. The first DARPA Cyber Grand Challenge was ten years ago. Mythos is the thing DARPA was trying to seed. A corporate coalition got there first. That's probably the most honest summary of where cybersecurity policy has ended up in 2026.

The part I'll stake something on

Here is what I think is true.

Anthropic makes the best models. I've thought so since Sonnet 3.5, and nothing since has changed my mind.

They are also the most authoritative, most opinionated, most carefully voiced lab in the industry. They have the best researchers, the best system cards, the best essays, the best blog posts, and the best way of telling you what they think about AI without sounding like they're selling you anything.

Those two things come from the same skill.

Anthropic hires writers the way other labs hire researchers, and it shows in every system card they publish.

When Anthropic says a model is too dangerous to ship, people believe them.

Google has probably trained a model like Mythos. Meta has too. Neither lab could have run this announcement the way Anthropic did. They haven't spent three years earning the right to the word "withhold."

This is the move other labs are going to study. Dario Amodei was VP of Research at OpenAI in 2019 when the company announced GPT-2 was too dangerous to release. That version reached maybe a few thousand ML researchers. Dario left OpenAI in late 2020 to co-found Anthropic. Seven years later, he's running the same play at a scale OpenAI never attempted.

Glasswing moved tens of billions in market cap in a week. It rewrote the competitive shape of an entire sector. It generated regulatory goodwill during ongoing conversations with US officials.

Dario took the PR playbook with him when he left OpenAI. At Anthropic, he had the runway to perfect it.

The glasswing butterfly is defined by what you can see through it. Project Glasswing is defined by what ninety-nine percent of us can't.
